Artificial Intelligence in Manufacturing & Supply Chain is transforming how we live, work, and even play. It powers new technologies such as self-driving cars and facial recognition, and it significantly improves existing ones such as medical diagnostics, search engines, and programs that play board games like chess and Go.

The demand and appetite for AI-powered technologies are growing rapidly, yet there is no broad consensus on how to define them.

The Need For a Definition

Our modern lives greatly benefit from the ubiquity of artificial intelligence (AI). They have been positively impacted by technologies such as smartphones, self-driving cars, medical devices, and reusable space vehicles, all of which are empowered by AI.

The term itself hints at what it stands for, a program that mimics intelligence artificially, but a more complete definition requires elaborating on what intelligence means. Typical attributes of human intelligence include:

- cognitive functions such as reasoning, learning, and the ability to apply what was learned to a new situation or context

- creativity

- problem solving

- perceiving the environment or other entities and interacting with them

Systems that exhibit these attributes are considered to be artificially intelligent. A self-driving car that perceives its environment, understands that it is at a traffic light, and reasons that it needs to stop there is one example of artificial intelligence. A voice assistant that understands voice inputs and replies cogently is another. However, the underlying algorithms that embed the intelligence are numerous and varied. One of those is machine learning.

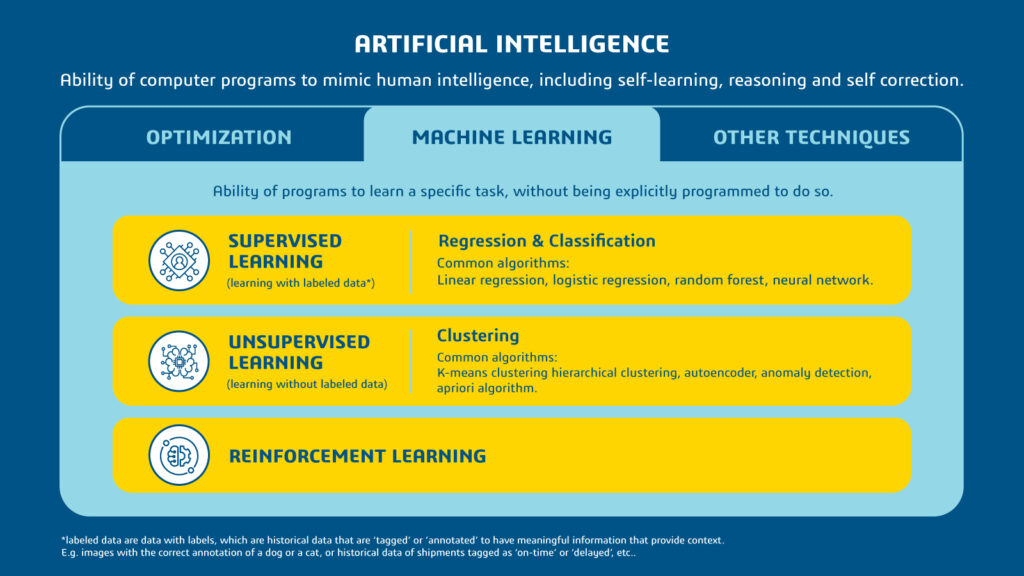

Machine learning (ML) is the ability of a program to learn a task without being explicitly programmed to do so. ML algorithms detect patterns in data and learn how to make predictions and recommendations. When the underlying pattern is complex, such predictions are often considerably more accurate than those of traditional methods.

The example above depicts historical data that a manufacturer uses to predict a potential delay in order fulfillment. The ML-powered program captures the richer pattern in the chart and predicts the delay more accurately than the traditional method, which would have fitted a simple linear pattern and produced an inaccurate prediction.
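The contrast between a straight-line fit and a pattern-aware model can be sketched in a few lines of Python. Everything here is an assumption made for illustration: the delay data is synthetic (a repeating 12-week cycle), and a k-nearest-neighbours regressor stands in for the ML model, since the article does not say which model the manufacturer uses.

```python
import math

# Synthetic, illustrative data (an assumption, not taken from the article):
# fulfillment delay in days follows a repeating 12-week cycle.
weeks = list(range(48))
delays = [5 + 3 * math.sin(2 * math.pi * w / 12) for w in weeks]

# Even weeks are used for fitting, odd weeks are held out for evaluation.
train = [(w, d) for w, d in zip(weeks, delays) if w % 2 == 0]
test = [(w, d) for w, d in zip(weeks, delays) if w % 2 == 1]


def fit_line(points):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def knn_predict(points, x, k=2):
    """Average the targets of the k training points closest to x."""
    nearest = sorted(points, key=lambda p: abs(p[0] - x))[:k]
    return sum(y for _, y in nearest) / k


def mean_abs_error(predict, points):
    """Mean absolute difference between predictions and true values."""
    return sum(abs(predict(x) - y) for x, y in points) / len(points)


slope, intercept = fit_line(train)
linear_mae = mean_abs_error(lambda x: slope * x + intercept, test)
knn_mae = mean_abs_error(lambda x: knn_predict(train, x), test)

print(f"linear fit MAE: {linear_mae:.2f} days")
print(f"k-NN MAE:       {knn_mae:.2f} days")
```

On this synthetic data the straight line cannot follow the cycle, so its error on the held-out weeks is larger than that of the neighbour-based model. Real fulfillment data may behave differently, and a linear model can be the better choice when the relationship really is linear.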

Common Types of Machine Learning

ML algorithms are typically classified as supervised, unsupervised, or reinforcement learning, depending on how they learn. Supervised and unsupervised methods learn from data, while reinforcement learning learns through feedback, or ‘reinforcement’.

Exhibit 2: Grand scheme of AI

Supervised learning is achieved through labeled data, i.e. data with labels for an explicit output variable. Labeled data are historical data that have been ‘tagged’ or ‘annotated’ with meaningful information that provides context. For instance, to learn to predict the expected time of arrival of a flight from flight attributes such as the number of passengers and the time and port of departure, the algorithm needs historical data with those attributes together with the corresponding status of each flight, i.e. a label saying whether it was on time or late. Depending on whether the labels are continuous numbers (e.g. expected time of arrival of a flight, expected rainfall in the next 24 hours) or discrete classes (e.g. on time/late, accept/reject, cat/dog), the task is called regression or classification, respectively.

Exhibit 3: Example of supervised learning
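The difference between regression and classification can be sketched with one small labeled dataset. The flight history below is hypothetical, as are the 15-minute lateness threshold and the function names. A least-squares line and a 1-nearest-neighbour rule are used only because they are short; they are not the methods of any particular system.

```python
# Hypothetical labeled history: (departure delay, arrival delay), both in minutes.
history = [(0, -5), (5, 2), (10, 6), (20, 18), (30, 27), (45, 40), (60, 58)]

# Regression: the label is a continuous number (arrival delay in minutes).
n = len(history)
mean_x = sum(x for x, _ in history) / n
mean_y = sum(y for _, y in history) / n
slope = (
    sum((x - mean_x) * (y - mean_y) for x, y in history)
    / sum((x - mean_x) ** 2 for x, _ in history)
)
intercept = mean_y - slope * mean_x


def predict_arrival_delay(departure_delay):
    """Least-squares estimate of the arrival delay in minutes."""
    return slope * departure_delay + intercept


# Classification: the label is a discrete class ("on time" or "late").
LATE_THRESHOLD = 15  # assumed cut-off in minutes, for illustration only
labeled = [(x, "late" if y > LATE_THRESHOLD else "on time") for x, y in history]


def predict_status(departure_delay):
    """1-nearest-neighbour: copy the label of the most similar past flight."""
    _, label = min(labeled, key=lambda p: abs(p[0] - departure_delay))
    return label


print(round(predict_arrival_delay(40), 1))  # a number of minutes
print(predict_status(3), predict_status(50))  # a class for each flight
```

Both predictors learn from the same historical flights. Only the type of label changes: a number of minutes for regression, a category for classification.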

An unsupervised learning algorithm explores input data without being given an explicit output variable; it has to find patterns and group the data on its own (e.g. customer segmentation, recommender systems).
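Customer segmentation can be sketched with k-means clustering, one common unsupervised method. The customer data, the choice of two segments, and the naive initialization below are assumptions made for illustration. In practice the features would usually be scaled first and the starting centroids chosen more carefully.

```python
import math

# Hypothetical customers: (orders per year, average order value).
# No labels are provided; the algorithm has to find the groups itself.
customers = [(2, 40), (3, 55), (4, 45), (25, 400), (28, 380), (30, 420)]


def kmeans(points, k, iterations=10):
    """Plain k-means: alternate between assigning points and moving centroids."""
    centroids = list(points[:k])  # naive, deterministic start: the first k points
    assignments = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: each point joins its nearest centroid.
        assignments = [
            min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points
        ]
        # Update step: each centroid moves to the mean of its members.
        for c in range(k):
            members = [p for p, a in zip(points, assignments) if a == c]
            if members:  # keep the old centroid if a cluster ends up empty
                centroids[c] = tuple(sum(dim) / len(members) for dim in zip(*members))
    return assignments, centroids


segments, centers = kmeans(customers, k=2)
print(segments)  # one segment index per customer
print(centers)   # the average customer of each segment
```

The algorithm is never told which customers are small or large buyers. The two segments emerge only from how close the customers are to each other.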

In reinforcement learning, an algorithm learns to perform a task by receiving feedback, or ‘reinforcements’, and trying to maximize the reinforcements it receives for its actions (e.g. maximizing the reward it receives for increasing the useful life of a machine).
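A minimal sketch of this feedback loop is the epsilon-greedy strategy for a multi-armed bandit, one of the simplest reinforcement learning settings. The maintenance actions, their reward values, and the noise level below are all hypothetical. The learner never sees the reward table and only observes the noisy reward that follows each action.

```python
import random

# Hypothetical average reward per action (e.g. extra months of useful machine life).
# This table is hidden from the learner and only drives the simulated environment.
TRUE_MEAN_REWARD = {
    "run_to_failure": 1.0,
    "monthly_service": 2.0,
    "weekly_service": 1.5,
}

rng = random.Random(0)  # fixed seed so the run is repeatable


def environment(action):
    """Return a noisy reward for the chosen action."""
    return TRUE_MEAN_REWARD[action] + rng.uniform(-0.2, 0.2)


def train(steps=500, epsilon=0.1):
    """Epsilon-greedy learning of the value of each action."""
    actions = list(TRUE_MEAN_REWARD)
    estimates = {a: 0.0 for a in actions}
    counts = {a: 0 for a in actions}
    for step in range(steps):
        if step < len(actions):
            action = actions[step]  # try every action once to start
        elif rng.random() < epsilon:
            action = rng.choice(actions)  # explore: pick a random action
        else:
            action = max(actions, key=estimates.get)  # exploit: best estimate so far
        reward = environment(action)
        counts[action] += 1
        # Incremental update of the running mean reward for this action.
        estimates[action] += (reward - estimates[action]) / counts[action]
    return estimates, counts


estimates, counts = train()
best_action = max(estimates, key=estimates.get)
print(best_action)
print(counts)
```

The program is never told which action is best. It tries actions, observes the reward, and gradually favours the action with the highest estimated reward while still exploring occasionally.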